Was there ever a market for just five computers, and is there now?

By Adam Tamburini, International SVP at e-shelter

In 1943 the then CEO of IBM, Thomas J Watson Sr, is said to have remarked that ‘…there is a world market for about five computers.’ In fact, Watson may never have said anything of the kind, but that’s a different story. The fact remains that this is often quoted as an early prediction for technology and computing. But has it come true?

Obviously, there are now many more than five computers, and equally obviously, the sheer volume of modern devices is something that nobody foresaw in 1943. Indeed, the belief that personal computers would never catch on in a big way, and that computing would remain primarily a business and political tool, persisted for several decades after that. So, if we are to take Watson’s words literally and assume he was using the term ‘computers’ in the sense that we use it today, then of course he was wrong.

Or was he?

The early 1940s were crucial to computing, and the decade saw many innovations, frequently driven by the demands of war. The first example of remote access computing occurred in 1940, when the Complex Number Calculator in New York was operated remotely during a demonstration at Dartmouth College. This was followed a year later by Germany’s Z3, the world’s first programmable, automatic computing device. In the same period, the UK brought the Bombe into service, an electromechanical machine designed by Alan Turing to help decrypt Axis communications during World War II.

A number of further machines, that might loosely be called ‘computers’, followed. But unlike today’s computers, almost all of them were so massive they required a room to themselves, and almost all were designed to oversee and manage large-scale operations of some kind. The world had to wait until the early 1970s for anything that resembled a personal computing device of the type we use today.

So, to put Watson’s ‘quote’ into context, perhaps he foresaw a future where only five large-scale computing applications were required, to handle large-scale data and functionality of national and international importance. And if that is the case, perhaps he was closer to the truth than has been acknowledged.

Back to the mainframe

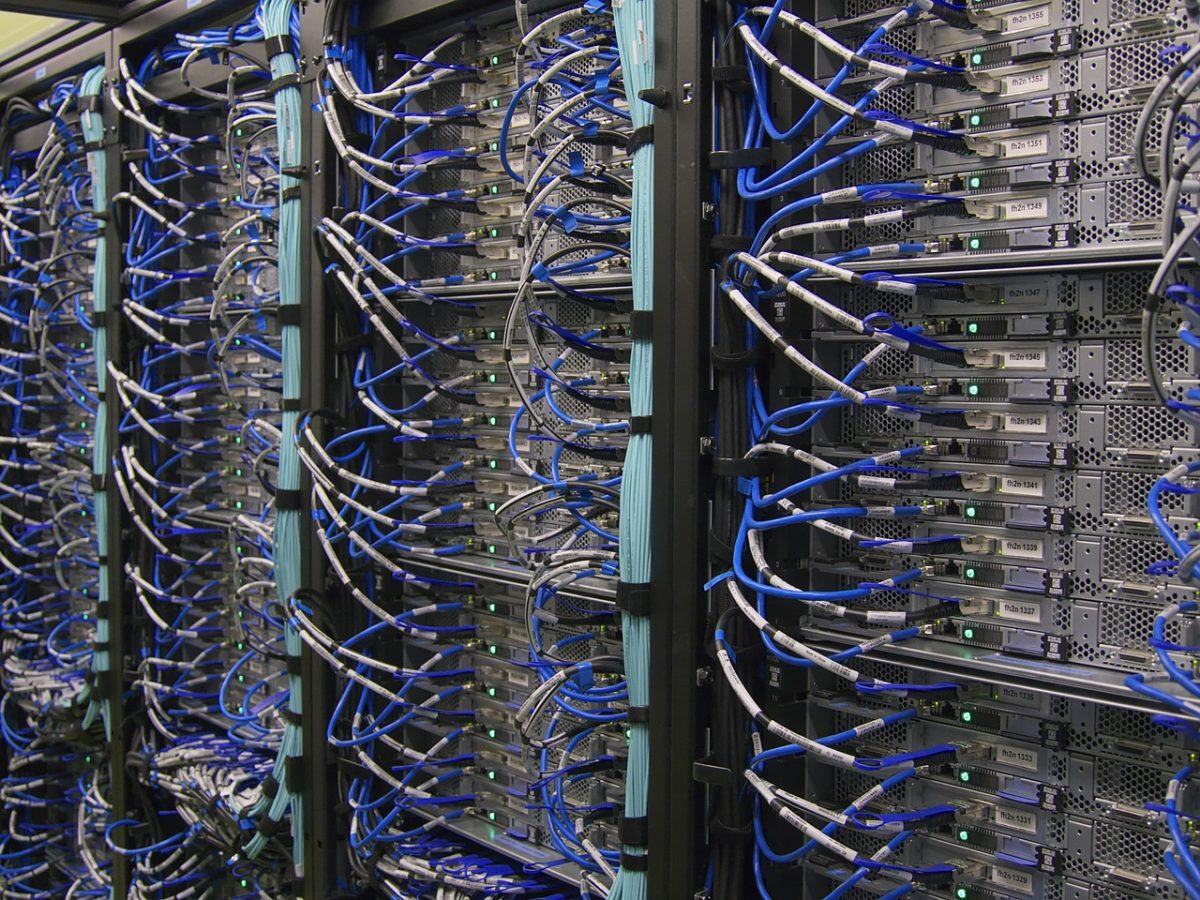

For in many ways, even though there are now so many applications, devices and uses of what might broadly be termed ‘computers’, the world is returning to the old habit of relying on a ‘mainframe’ system: a large-scale, central repository of processing power and data handling capability. Only today, we call them ‘data centres’, ‘large scale IT service providers’ or even ‘the cloud’.

If the period between the 1980s and 2010s saw the explosion of home computing, the Internet and technology in general, it could be argued that the world has now grown beyond that. The Internet is so vast, so flexible and full of potential, so socially and politically important and omnipresent, that it has taken on an identity of its own. The idea that it can be conceived of as merely a series of computers talking to each other seems faintly ridiculous. The world of technology has become bigger than was ever predicted, the volume of data vaster, and we need bigger concepts to capture these phenomena.

One of the biggest of these big concepts is cloud computing, which has evolved partly in response to the proliferation of technology and associated data. In one sense, the cloud in all its forms is the modern mainframe, albeit a vast, behemoth, super-mainframe. The sheer volume of data held in the cloud is mind-boggling, and more is being added daily as businesses and individuals migrate their data and applications.

Who, and where, is the cloud?

The cloud, of course, consists of organised provision, usually in the form of servers, and usually housed in a data centre. Are the data centres, therefore, actually the mainframes of today? Are these the super-computers that Watson foresaw?

Alternatively, perhaps we should look to the biggest of the providers to fill this role. The hyperscale cloud providers have brought cloud computing to the masses (quite literally), through provision of a range of user-friendly, easily accessible services that suit everybody from the teenager with an Android phone who likes to edit their selfies, to the largest of businesses requiring dedicated server space, assured regulatory compliance and management. Are the only five ‘computers’ that the world ultimately needs to be found among these companies?

Of course, which, if any, of these answers you choose depends largely on semantics and interpretation. The world of technology has changed so vastly since 1943 that it would be astounding if anybody could have predicted the current, cloud-based, world.

But there really is no doubt that the world is moving back towards centrally managed provision in computing, simply because the volume of data, and the potential for its application, is so great. What is more, big data is not the only driving force. Artificial Intelligence, machine learning and all of the great developments that lie just over the horizon are just as dependent upon well-organised data provision as the smallest of businesses, and it’s hard to imagine a time when this will not be the case.

Whether this is anywhere close to what Watson had in mind, of course, is another question entirely…

Alice Bonasio is a VR and Digital Transformation Consultant and Tech Trends’ Editor in Chief. She also regularly writes for Fast Company, Ars Technica, Quartz, Wired and others. Connect with her on LinkedIn and follow @alicebonasio on Twitter.