At #MSBuild2017 Microsoft’s Alex Kipman defined the company’s Mixed Reality vision for developing what he called the “Virtual Interaction Spectrum”

I first got in touch with Alex Kipman back in 2008, when I was writing an article for a gaming magazine about a secretive project code-named “Project Natal,” which later became the Kinect motion sensing system for the Xbox 360. For me, it was interesting to see how this rising star at Microsoft, a Brazilian expat like myself, was finding new and intuitive ways for humans and machines to interact. And although the technology was ostensibly focused on gaming, one couldn’t fail to spot broader potential applications down the line if they pulled it off.

Fast-forward to Microsoft Build 2017, and Kipman is something of a rock star figure, cheered enthusiastically as he took to the main stage for his day 2 keynote on Mixed Reality. Because if Microsoft now believes that MR represents “the new frontier of computing”, it’s in large part down to him pushing and developing that vision over the past decade. He is ultimately working towards a world where we can interact with computers in as natural a way as possible, and all devices eventually become lenses.

In his keynote, Kipman also took the opportunity to address the sometimes controversial terminology surrounding that vision, referring to a “Mixed Reality Spectrum” that runs all the way from simpler forms of augmented reality (think Pokémon Go) to fully immersive virtual reality to 3-D interactive holograms. It boils down to thinking about the interaction between the virtual and real worlds in terms of “and” instead of “or,” he said.

In keeping with that strategy, Microsoft unveiled hardware and platform features designed to support the growth of what it now officially calls “Windows Mixed Reality.” These moves are meant to enable developers to create compelling content that will in turn drive broader consumer adoption, while treating all forms of virtual and augmented reality as part of this broader continuum.

And as much as some sceptical pundits might dismiss this terminology as a marketing play, it is actually based on a widely cited paper on the subject published by Paul Milgram. Even back in 1994, when it was first published, A Taxonomy of Mixed Reality Visual Displays found it difficult to establish clear-cut categories that differentiated between these types of virtual experience, and predicted that in future they would increasingly blend into one another.

What people like Kipman argue is that different headsets and experiences will mix physical and virtual reality to varying degrees, and that each of those experiences will occupy a particular place on the Mixed Reality spectrum. We will increasingly have more versatile hardware and content that allow users to navigate through that spectrum seamlessly, so there will be no need to worry about which type of headset you have: the best possible experience will automatically be delivered to whichever HMD you are using.

This context helps us make sense of why Microsoft insists on calling the headsets unveiled at Build “Mixed Reality” rather than “Virtual Reality” headsets, even though VR is what most people would call the fully occluded experience they offer at this stage. Built in partnership with Acer and HP, these headsets use the same groundbreaking inside-out tracking technology developed for the HoloLens. Developers can already pre-order them ahead of a consumer launch scheduled for the 2017 holiday season. Microsoft also plans to release a set of Mixed Reality motion controllers which, unlike systems such as the HTC Vive and Oculus Rift, will not require any external physical markers, working instead with the headset’s internal motion sensors.

In this Huffington Post article, I look at the company’s recent announcements and how they tie into its long-term vision for the development of Mixed Reality technology.

A hint of how that integration across devices works was showcased during the Cirque du Soleil demo (more on that below), where a remote participant was beamed in as an avatar to join the HoloLens-wearing people on stage. Using one of the new fully occluded headsets, that person was able to see what they were seeing and talk to them. The idea, then, is for people to be able to mix and match HoloLens with headsets such as the new Acer one, as developers design apps in which both can share experiences in Mixed Reality. The “shell” of the user interface would of course vary depending on the display technology, but each experience would be running on the same software. And Microsoft wants that software to be Windows 10, which is why it has chosen to focus on platforms rather than devices. Instead of getting too hung up on defining and developing for each subset of the Mixed Reality continuum, it sees the advantage in taking a holistic approach.

Yet as much as the hardware tends to grab most of the attention at such events, Microsoft’s bigger play is definitely all about the platform on which such devices, and many more to come, will run. The Windows 10 Creators Update began rolling out last month to more than 500 million Windows 10 devices around the world, and the Fall Creators Update will follow later this year. It incorporates a new Fluent Design System that promises to deliver intuitive, harmonious, responsive and inclusive cross-device experiences and interactions.

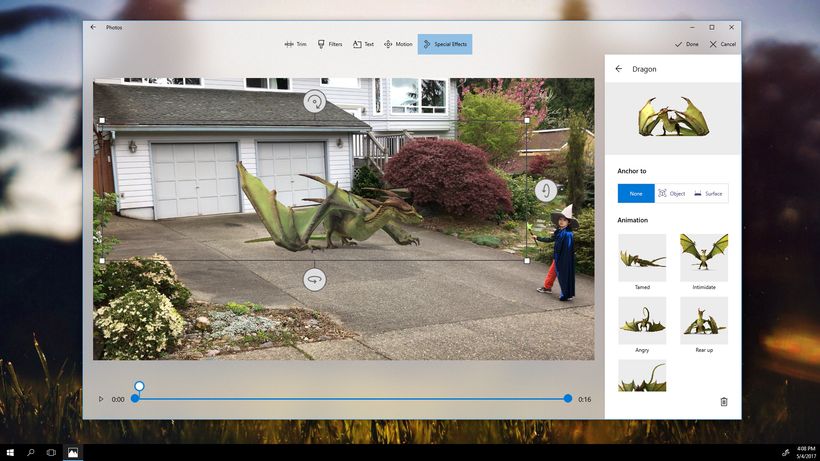

What all that blurb translates into is the idea that getting things done across all your devices should be easy, and ultimately not something you need to think about at all. The user experience should flow seamlessly between Windows, iOS, and Android devices, and as more Mixed Reality-enabled devices join that ecosystem, they will become a bigger part of the content mix. One of the most popular demos at Build, the Story Remix app, showed how that looks in practice: it leveraged the Microsoft Graph to create stories with a soundtrack, theme, and cinematic transitions, and let users incorporate MR elements by adding 3D objects to photos and videos.


But as impressive as the Story Remix demo was, the pièce de résistance was brought to the Seattle audience courtesy of a bunch of creative Canadians. A team from Cirque du Soleil demonstrated a scenographic Mixed Reality tool developed for them by Finger Food Studios, a Microsoft HoloLens partner based near Vancouver. It enables the creation of interactive 3D blocks and shapes that can be quickly transformed into a full scale rendering of a stage. It’s designed to help creators make faster and better decisions around casting, costume, stage height and much more by giving them the ability to test out ideas at scale before even putting out a casting call.

Ryan Peterson, Finger Food’s CEO, said they managed to turn the project around in just three weeks, drawing on their experience of producing large-scale holograms (having previously worked with truck manufacturer Paccar to improve the efficiency of its industrial design processes).

Yet while the manipulation and scaling of objects was good, what set this demo a notch above the rest, as befitted a keynote unveiling, was the addition of live performers, conjured up just as they would appear in a live Cirque du Soleil show. The contortionists and dancers gave what would otherwise have been an impressive technical demo a “One More Thing” kind of edge. This felt like the start of something big.

Later on, I asked Bernard Fouché, General Manager, Innovation, at Cirque du Soleil’s C:Lab how they got the moving holograms of the performers to look so good:

“A couple of weeks ago the Cirque just happened to be performing in Seattle, so they asked the performers to go to the Microsoft campus in Redmond so we could hologram them,” he revealed.

Their overarching goal is always to use technology to support the creative process, explains Fouché. Putting together a Cirque du Soleil production is a process which takes almost two years, and they hope that by using HoloLens they will not only be able to better communicate their plans and vision with investors and partners at an earlier stage, but also avoid costly mistakes which only become apparent when seeing things in full 3-D scale. “That’s why our studio in Montreal is the exact same scale as one of our big top shows. Scale is extremely important as creative people are very visual.”

Another use case where the advantage of using Mixed Reality becomes apparent is data visualization. My demo of the new Acer headset at Build, for example, was done in collaboration with Datascape, and allowed me to quickly and easily wrap my head around complex datasets mapping out weather conditions, so that within minutes I could make fairly informed decisions about where best to place solar panels and wind turbines. It’s hard to argue that making this sort of thing available to every policymaker (and voter) out there wouldn’t be an incredibly valuable and powerful proposition.


For certain kinds of data there is even more of an advantage, as is the case with earthquake data. Since earthquakes happen below the surface of the earth at varying depths, it is difficult to make sense of patterns even when that data is shown as a 3D visualization on a 2D screen. Yet the exact same data presented on a device such as the HoloLens, where you can place a globe in the middle of the room and walk around it, instantly allows users to spot the position of seismic faults and gives them a distinct advantage in spotting patterns they might otherwise miss.

According to Lili Cheng, a distinguished engineer and general manager of FUSE Labs, the challenge is to bring the power of vision into people’s everyday lives. She’s been working with Kipman’s team to figure out ways of combining 2D and 3D elements with AI to create meaningful mainstream experiences, including integration with Cortana.

“We know we need to design everything to work with rich voice interaction, because that’s such a big part of how you want to interact with the HoloLens,” she says.

There is a general feeling that a lot of elements, such as cloud capabilities, artificial intelligence and hardware development, are coming together much faster than predicted, and that the only limit to the experiences we’ll be able to create will be our own imagination. It feels like an exciting time to be involved in that ecosystem, a bit like the early days of the Internet or mobile, but in a way even bigger, since it will be an evolution of those technologies rather than a replacement for them.

Kipman’s excitement about what Mixed Reality will achieve is apparent, even after all these years. After the keynote, he wandered the show floor trying out all the different demos. In fact, while I was waiting my turn at the Viacom NEXT booth, he was so eager to see their Withdrawal music video demo (which both of us found to be pretty awesome) that he inadvertently cut in front of me in the line. After he’d finished, I jokingly berated him for it and charged him a selfie, exchanging a few words in Portuguese along the way. After years living abroad, it’s always nice to see a fellow Brazilian helping to shape the future.

Mixed Reality: Can Microsoft Build IT? https://t.co/slUpTKOje8 via @HuffPostBlog

— Alice Bonasio (@alicebonasio) May 17, 2017

For companies looking to gain a competitive edge through technology, Tech Trends offers strategic Virtual Reality and Digital Transformation Consultancy services tailored to your brand.

Alice Bonasio is a VR and Digital Transformation Consultant and Tech Trends’ Editor in Chief. She also regularly writes for Fast Company, Ars Technica, Quartz, Wired and others. Connect with her on LinkedIn and follow @alicebonasio on Twitter.